HCI Models, Theories and Frameworks:

Toward a Multidisciplinary Science

by John Carroll

Carroll, J. M. (Ed.) (2003). HCI Models,

Theories and Frameworks: Toward a Multidisciplinary Science.

San Francisco: Morgan Kaufmann publishers. ISBN:

1-55860-808-7.

This collection of tutorial articles is an appropriate survey for the graduate

level student. [DS]


Ch. 1: Introduction

Ch. 2: Design as Applied Perception (visual senses)

Ch. 3: Predictive vs. Descriptive Models, Fitts' Law

Ch. 4: GOMS, KLM, Advanced / Modified GOMS

Ch. 5: Cognitive Dimensions of Notations Framework

Ch. 6: Users' Mental Models

Ch. 7: Information Foraging Theory, Optimal Foraging

Theory, Scatter/Gather (evolutionary)

Ch. 8: Collaborative Technologies / Distributed

Cognition

Ch. 9: Cognitive Work Analysis (CWA)

Ch. 10: Clark's Common Ground Theory (as used in CMC)

Ch. 11: Activity Theory

Ch. 12: CSCW Research (Psychological foundations)

Ch. 13: Ethnography, Situated Action, Ethnomethodology

Ch. 14: Computational Formalisms

Ch. 15: Design Rationale as a Theory

Chapter 1: Introduction

HCI lies at the intersection of the social and behavioral sciences and computer and information technologies. It is the fastest growing CS field, and needs a multidisciplinary approach to keep flourishing.

HCI practitioners: analyze and

design user interfaces, integrate technology to support human activity

-

in the past: WIMP interface,

voice / video interfaces

-

today: better input / display

devices (mobile computing), information visualization (digital

libraries), navigation techniques (virtual environments)

Initially, HCI brought cognitive science

theories to focus on software development

Activity theory brought into HCI

(Marxist foundations), complemented cog. sci.

While a multidisciplinary approach helps

HCI theories, a tradeoff is the fragmentation of HCI (it's very difficult to

make sense of the vast, diverse science of HCI; to synthesize a

comprehensive, coherent methodological framework)

Goal of the book: To 'survey'

the many approaches to HCI; compare / contrast them

- each chapter is a different approach / method


Chapter 2: Design as Applied

Perception

Much of human intelligence can be

characterized as our ability to recognize patterns

Vision dominates as the primary sense:

engaging 50% of the cortex and more than 70% of all our sensory receptors

Why we care: we have a

fundamental assumption that there is such an entity as the human

visual system

display design - map data

into a visual form that is matched to our perceptual capabilities

In Cognition, humans share a similar

muscular / skeletal structure, but have a highly adaptable neural structure,

which allows for a high degree of adaptation (humans are highly adaptive

machines)

Information Psychophysics

- concerned with how we can see simple elementary information patterns

(paths in graphs, clusters of items, correlated variables, etc.)

- Theory of Affordances (J. J.

Gibson) - a highly relevant theory, saying that affordances are physical properties of the environment that are

directly perceived (before HCI / computers)

- Since a computer screen doesn't have

a physical environment, we use a metaphor - which says

that a good user interface is similar to a real-world situation

- Information Psychophysics can be

seen as a component of a larger set of cognitive models, such as ACT-R

(Adaptive Control of Thought - Rational [Anderson]) and EPIC

(Executive-Process/Interactive Control [Kieras]) which focus on the cognitive processing of sensory information

3 Stage Model of the Visual System: describes the very fast processing our brain carries out when we see something

-

Stage 1: Early Vision:

our visual image is analyzed into color, motion and shape

- involves eye optics,

photoreceptors

- use trichromatic system

to see colors- segment into Red / Green / Blue

- use luminance to see

detail / contrast

- the theory of color vision is

that we separate color into red-green, blue-yellow, and black-white

opponent color channels

- Opponent colors explain

why red, green, yellow, blue, white and black are special colors in

all societies, and why we should use these colors first if we need

to color-code things

- Since the color channels convey fine detail and patterns poorly, we use luminance contrast to show detail

- preattentive processing

theory - uses early stage processing, and tells us how to

design things so that they POP-OUT of a cluttered display.

(Color, motion, texture, stereoscopic depth can be preattentively

perceived, and understanding these limitations is critical in making

displays that can be rapidly interpreted)

-

Stage 2: Pattern

Perception: we split what we see into segments: a 2-D image,

pick up edges, etc.

-

we can display data using

patterns that are easy to perceive, which facilitates problem

solving

-

GESTALT psychologists (Wertheimer, Koffka, Köhler) were the first to theorize about pattern perception:

- Proximity - entities

that are close together are perceptually grouped

- Good Continuity - smooth continuous lines are more readily perceived

- Symmetry - symmetric

objects are more readily perceived

- Similarity - similar

objects are perceptually grouped

- Common Fate - objects

that move together are perceptually grouped

- Common Region - (added later) - objects in enclosed spaces are grouped

- Connectedness -

(added later) - objects connected by continuous contours are

perceived as related

-

In HCI, visual displays can

support creative thinking and problem solving

-

Stage 3: we perceive

objects (infer based on stages 1 & 2) out of what we see

-

working memory -

core of modern models of cognitive processing

-

Structured object

perception (Biederman) - we perceive objects as composed of

simple linked 3-D solid shape primitives, called GEONs. There

is considerable difference between high-level (object perception,

color is secondary) and low-level vision (color perception, etc)

In the above system, feedback loops

can modify what we see. Higher stages can feedback to lower stages to

modify what we see. Lower stages are more robust, while higher stages

involve more inference making.

In the above system, culture makes it difficult to design by visual patterning, since some aspects of displays owe their value to cultural factors (red, green, yellow and blue carry different meanings in different cultures). Some cultural aspects are so ingrained that there's no way to design around them.


Chapter 3: Motor Behavior Models

for HCI

Why model motor behaviors? Because

we need to match movement limits, capabilities, and potentials of humans -

to input devices and interaction techniques - on computing systems

A model is a simplification of reality

that we can use to design, evaluate and provide a basis of understanding a

complex behavior of complex artifacts.

Models lie somewhere in a continuum:

analogy / metaphor <-- ? --> mathematical equations

-

Predictive Models: on

the mathematical end

(a.k.a. Engineering Models or Performance Models, such as Fitts' Law)

-

Descriptive Models: on

the metaphorical end of the spectrum

(Descriptive, such as Guiard's model - provide a

framework / context to think about a problem)

More on Predictive Models:

allow human performance to be

analyzed analytically, avoiding time-consuming experiments

predictions are a-priori, allowing

for hypothetical exploration of a design scenario

Hick-Hyman Law: an example predictive model; it predicts human choice reaction time from the number of available choices, using an equation
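As a rough illustration, the Hick-Hyman law is commonly written RT = a + b * log2(n + 1) for n equally likely choices. The sketch below assumes that form; the constants `a` and `b` are placeholders that would normally be fitted to experimental data, not values from the book.

```python
import math

def hick_hyman_rt(n_choices: int, a: float = 0.2, b: float = 0.15) -> float:
    """Predicted choice reaction time in seconds for n equally likely choices.

    a (intercept) and b (slope, seconds per bit) are illustrative
    placeholders, not measured constants.
    """
    if n_choices < 1:
        raise ValueError("need at least one choice")
    return a + b * math.log2(n_choices + 1)

# Reaction time grows with the logarithm of the number of choices.
for n in (1, 3, 7):
    print(n, round(hick_hyman_rt(n), 3))
```

Doubling the number of menu items therefore adds a roughly constant increment to the predicted time rather than doubling it.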

Fitts' Law: a predictive model for human movement (relates movement time to the amplitude and required accuracy of a movement; yields an index of performance for comparing the efficiency of different devices / interfaces)

- drawn from Shannon's theory -

uses electronic noise (accuracy) and electronic signal (amplitude)

to quantify a movement task's index of difficulty (ID) and

predict movement time (MT) to complete a task

- ID / MT = Index of

Performance (IP); now known as Throughput (TP)

- The goal of Fitts' law:

determine which devices / interaction techniques are most

efficient by comparing performance measures

An example: keystroking on mobile phones: ones with multi-tap vs. ones with one-key disambiguation (guesses the most common word based on key presses): the second is faster according to Fitts' law, although more error prone

- Fitts' law isn't appropriate for complex tasks, like measuring typing speed on a QWERTY keyboard (complex task with 2 hands)
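The quantities above can be sketched in a few lines. This assumes the Shannon formulation of the index of difficulty, ID = log2(A / W + 1), and a linear movement-time model MT = a + b * ID; the regression constants `a` and `b` are hypothetical and would be fitted per device in a real study.

```python
import math

def index_of_difficulty(amplitude: float, width: float) -> float:
    """Index of difficulty in bits (Shannon formulation)."""
    return math.log2(amplitude / width + 1)

def predicted_movement_time(amplitude: float, width: float,
                            a: float = 0.1, b: float = 0.2) -> float:
    """MT = a + b * ID, in seconds; a and b are placeholder constants."""
    return a + b * index_of_difficulty(amplitude, width)

def throughput(amplitude: float, width: float, observed_mt: float) -> float:
    """Index of performance / throughput = ID / MT, in bits per second."""
    return index_of_difficulty(amplitude, width) / observed_mt

# A 32-unit-wide target at a distance of 224 units has ID = log2(8) = 3 bits.
print(index_of_difficulty(224, 32))
print(predicted_movement_time(224, 32))
print(throughput(224, 32, observed_mt=0.75))
```

Comparing `throughput` across devices on the same set of targets is the kind of efficiency comparison these notes describe.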

Keystroke-Level Model (KLM):

a predictive model for predicting time to do a task by dividing into

sub-tasks:

K = Keystroking

P = Pointing

H = Homing

D = Drawing

M = Mental preparation (the single mental operator)

R = system Response (the single system-response operator)

T_execute = t_K + t_P + t_H + t_D + t_M + t_R
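A minimal sketch of a KLM estimate, which adds up the time of every operator in a task, including system response time. The per-operator times below are commonly cited approximations and should be treated as assumptions; drawing (D) and system response (R) depend on the task and system, so they are passed in by the caller.

```python
# Approximate operator times in seconds (assumed, not taken from these notes).
OPERATOR_TIMES = {
    "K": 0.28,  # keystroke or button press, average typist
    "P": 1.10,  # point at a target with a mouse
    "H": 0.40,  # home hands between keyboard and mouse
    "M": 1.35,  # mental preparation
}

def klm_time(sequence: str, drawing: float = 0.0, response: float = 0.0) -> float:
    """Sum operator times for a sequence such as 'MHPK'.

    Every 'D' costs `drawing` seconds and every 'R' costs `response`
    seconds, a simplification made for this sketch.
    """
    total = 0.0
    for op in sequence:
        if op == "D":
            total += drawing
        elif op == "R":
            total += response
        else:
            total += OPERATOR_TIMES[op]
    return total

# Think, reach for the mouse, point and click, think, point and click again.
print(round(klm_time("MHPKMPK"), 2))
```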

Other Predictive Models: GOMS

(see Ch. 4), Programmable User Model (PUM: Young, Green, Simon

1989)

More on Descriptive Models:

Descriptive Models provide a

framework / context for thinking about a problem

Example: Key-Action Model (KAM):

divides keys on a keyboard into 3 categories: symbol keys,

modifier keys, and executive keys (simple model, organizes

keys into categories)

Three-State Model of Graphical

Input (Buxton): Descriptive model, says computer pointing

devices follow a state transition diagram of 3 states: out of

range, tracking, and dragging (good for analyzing different types of

pointing devices [touchpad, trackpad, mouse, etc]) - resulted in the redesign of touchpads to be pressure sensitive, so that the drag state could be induced easily
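The three-state model can be written down as a small transition table. In the sketch below the event names and the per-device tables are hypothetical labels chosen for illustration; only the three states come from the model itself.

```python
from enum import Enum

class State(Enum):
    OUT_OF_RANGE = 0
    TRACKING = 1
    DRAGGING = 2

# (current state, event) -> next state. Event names are illustrative.
MOUSE = {
    (State.TRACKING, "button_down"): State.DRAGGING,
    (State.DRAGGING, "button_up"): State.TRACKING,
}
TOUCHPAD = {
    (State.OUT_OF_RANGE, "touch"): State.TRACKING,
    (State.TRACKING, "lift"): State.OUT_OF_RANGE,
}
PRESSURE_TOUCHPAD = {
    **TOUCHPAD,
    (State.TRACKING, "press_harder"): State.DRAGGING,
    (State.DRAGGING, "ease_off"): State.TRACKING,
}

def run(transitions, start, events):
    """Follow a sequence of events; unknown events leave the state unchanged."""
    state = start
    for event in events:
        state = transitions.get((state, event), state)
    return state

def reachable(transitions, start):
    """All states a device can ever enter from `start`."""
    seen = {start}
    frontier = [start]
    while frontier:
        current = frontier.pop()
        for (source, _event), target in transitions.items():
            if source == current and target not in seen:
                seen.add(target)
                frontier.append(target)
    return seen

print(State.DRAGGING in reachable(TOUCHPAD, State.OUT_OF_RANGE))
print(State.DRAGGING in reachable(PRESSURE_TOUCHPAD, State.OUT_OF_RANGE))
```

Under these assumed tables the plain touchpad never reaches the dragging state, which is the kind of gap the pressure-sensitive redesign addresses.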

Guiard's Model of Bimanual Skill:

studies between-hand division of labor in everyday tasks - see

chart of descriptive model on p. 41

- Features of non-preferred hand:

leads the preferred hand, sets spatial frame of reference for

preferred hand, performs coarse movements

- Features of preferred hand:

follows non-preferred hand, works within spatial frame of reference

(set by non-preferred hand), performs fine movements

Buxton & Myers found that people tend to use two hands even when not instructed to, and that doing so shortened task-completion times. The study offered little insight into hand use itself, but it was one of the first HCI papers on two-handed input.

Another study (Gibson) of keyboards found that they are right-hand biased (power keys on the right side), but the desktop as a whole is left-hand biased: left-handers can save time, because having the mouse to the left of the keyboard allows the right hand to use the keypad / power keys WHILE the left hand operates the mouse (no need to stop and switch between the two)


Chapter 4: Information

Processing and Skilled Behavior

GOMS is the primary focus of this

chapter (Goals, Operators, Methods, and Selection rules: a way

to describe a task and calculate the time to complete it)

- Goals: The users' goals:

what does the user want to accomplish with the software, and by when?

- Operators: The actions

that the software allows the user to take. Generally it is a

command such as a button press, menu selection, or a direct-manipulation

action.

- Methods: the sequence of

sub-goals and operators that can be used to accomplish a goal:

such as the sequence of moves necessary to cut and paste text to move it

from one location to another.

- Selection Rules: Giving

the user the personal "choice" (selection) to accomplish the goal in

those times when there is more than one method available to accomplish

the goal or certain task
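One way to picture the four GOMS components is as a small data structure. The sketch below is an illustration only: the goal, the two methods, their operator names and the selection rule are all hypothetical, built around the cut-and-paste example mentioned above.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Method:
    name: str
    operators: List[str]  # ordered operators (sub-goals omitted for brevity)

@dataclass
class Goal:
    name: str
    methods: List[Method]
    select: Callable[[Dict], str]  # selection rule: context -> method name

    def plan(self, context: Dict) -> List[str]:
        """Apply the selection rule and return the chosen method's operators."""
        chosen = self.select(context)
        for method in self.methods:
            if method.name == chosen:
                return method.operators
        raise KeyError(chosen)

move_text = Goal(
    name="move text",
    methods=[
        Method("menu", ["select text", "Edit > Cut", "click destination", "Edit > Paste"]),
        Method("shortcut", ["select text", "Ctrl+X", "click destination", "Ctrl+V"]),
    ],
    # Hypothetical rule: use shortcuts when the hands are already on the keyboard.
    select=lambda ctx: "shortcut" if ctx.get("hands_on_keyboard") else "menu",
)

print(move_text.plan({"hands_on_keyboard": True}))
```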

GOMS applies to situations in which users

will be expected to perform tasks that they already have mastered. It

does not work for tasks being done by novices that are trying out a new

interface design. The knowledge gathered by GOMS reflects what a

skilled person (expert) will do in a seemingly unpredictable situation.

GOMS works for single-user, active systems

where the system changes in unexpected ways or other people participate in

accomplishing the task. It has been shown to work very well in

analyzing user-paced, passive systems.

GOMS can be used quantitatively and

qualitatively.

- Quantitatively: gives good

predictions of performance time and relative learning time

- Qualitatively: can help design training programs and help systems, and can guide the redesign of inefficient systems, following an analysis of the results of a study

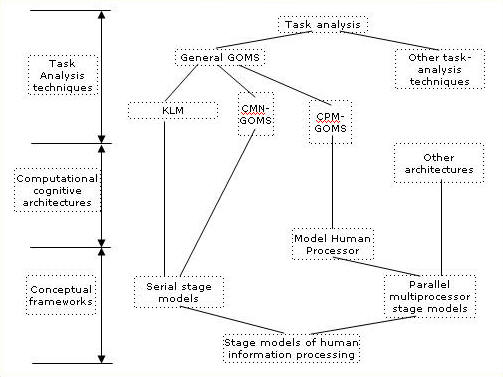

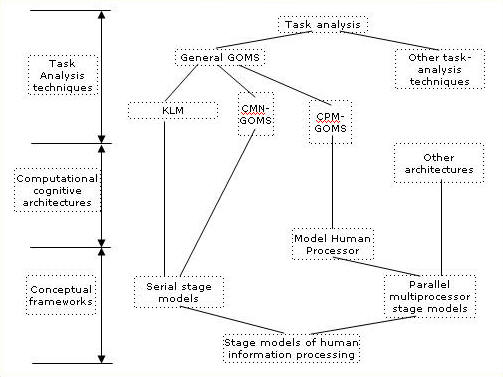

GOMS as a task analysis technique:

stemming from conceptual frameworks on human information processing

(Carroll, p. 65):

Conceptual frameworks -

informally stated assumptions about the structure of human cognition

serial stage models - we process

information in a serial sequence

parallel multiprocessor stage models - we process information like

parallel computing

Computational Cognitive Architectures

- also called unified theories of cognition - are proposals to make

cognition explicit enough to run as a computer program

The Model Human Processor (Card et al., 1983): ties together cognitive science and engineering models:

represents human cognition as a parallel computer. The parallel

processing being done:

-

Cognitive (thinking / thought process)

-

Perceptual (seeing / what we observe)

-

Motor (doing / what physical movements)

In the diagram above, the bottom is more

theoretical while the top is more practical. GOMS is a way designed to

make human information processing something that can be measured

practically.

Task Analysis Techniques -

these map out the system in terms of goals, operators, methods and selection

rules. There are 3 restrictions to what GOMS models can be used for:

-

there must be a way to do the task in

question

-

the task must be able to be 'routinely

done' by a skilled expert

-

the GOMS analyst must start with a list

of top-level tasks or user goals (provided from external sources outside

of GOMS)

GOMS Models:

-

KLM: Keystroke Level Model

(Card et al.)

-

this is the simplest GOMS:

turns a simple action into a sequence of steps for analysis

-

Time of execution = T(execute) = Tk + Tp + Th + Td + Tm + Tr

Operators: K (keystroke); P (pointing);

H (homing); D (drawing); M (mental preparation); R (system response

time)

-

The main advantage is this allows

for a very quick estimate of execution time with very little

theoretical / conceptual baggage. It is the most practical

GOMS technique.

-

CMN-GOMS: Card-Moran-Newell

GOMS

-

named this to differentiate itself

from other GOMS models as they began to appear

-

based on the serial-stage model (see

diagram above), not the Model Human Processor (MHP)

-

slightly more specified than general

GOMS

-

Quantitatively: predicts the

operator sequence and execution time

-

Qualitatively: focuses

attention on methods to accomplish goals. Similar methods are

easy to see. Unusually short or long methods jump out and can

spur design ideas.

-

While the KLM has no explicit goals,

CMN-GOMS does. The output of CMN-GOMS is in program form, so

it is executable.

-

CPM-GOMS: Cognitive,

Perceptual, Motor GOMS (Bonnie John)

-

based on the MHP (Model Human

Processor) - Cognitive, Perceptual and Motor Operators can run in

PARALLEL (subject to resource and information dependencies)

-

based on parallel multiprocessor

stage model of human information processing

-

CPM also stands for Critical Path

Method, since the critical path in a schedule (of parallel

processes) determines the overall execution time.

-

Quantitatively: predictions of

performance times can be read off the chart of CPM-GOMS

-

Qualitatively: analysis of

what portions of a design lead to aspects of the performance are

easy once the models are built

-

as with KLM, selection rules are not

explicitly represented in the chart because the chart is just a

trace of the predicted behavior

-

This is the only model representing

parallelism, so several goals can be achieved at one time, which is

characteristic of expert performance of a task
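The critical-path idea behind CPM-GOMS can be sketched as a longest-path computation over a dependency graph of operators. The operator names, durations and dependencies below are invented for illustration and are not taken from any published CPM-GOMS model.

```python
from functools import lru_cache

# name: (duration in ms, operators that must finish first). All hypothetical.
OPERATORS = {
    "perceive-target": (100, []),
    "attend-target":   (50,  []),
    "initiate-move":   (50,  ["attend-target"]),
    "verify-target":   (50,  ["perceive-target", "attend-target"]),
    "move-cursor":     (300, ["initiate-move"]),
    "click":           (100, ["move-cursor", "verify-target"]),
}

def critical_path(ops):
    """Return (total time, path) for the longest dependency chain."""
    @lru_cache(maxsize=None)
    def finish(name):
        duration, predecessors = ops[name]
        best_time, best_path = 0, ()
        for pred in predecessors:
            pred_time, pred_path = finish(pred)
            if pred_time > best_time:
                best_time, best_path = pred_time, pred_path
        return best_time + duration, best_path + (name,)

    return max((finish(name) for name in ops), key=lambda result: result[0])

total, path = critical_path(OPERATORS)
serial = sum(duration for duration, _ in OPERATORS.values())
print(total, path)   # time when operators overlap
print(serial)        # time if every operator ran one after another
```

The gap between the two printed totals is the time saved by letting perceptual, cognitive and motor operators overlap.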

Human Information Processing

-

this approach can be used to model more

complex human behaviors like problem solving, learning and group

interaction, all of which are critical to designing complex systems

-

some HCI practitioners think of GOMS as

a good tool, many think it is not (too cumbersome / time consuming)


Chapter 5: Notational Systems-

The Cognitive Dimensions of Notations Framework

http://homepage.ntlworld.com/greenery/workStuff/Papers/introCogDims/index.html

Think of the point of view of the system creators

(practice side, not so theoretical).

System designers try to incorporate new HCI Theories into

their designs, but have learned that some are more practical than others.

They want to use theoretical knowledge, but prefer things like checklists

which often aren't too theoretical. The authors believe that it

will not be possible to deal with these new notational systems by creating

new checklists.

Evaluation is a particular problem: many of the methods that have been suggested, such as user testing (laborious, expensive, done in artificial labs) and predictive models (expert-done calculations that predict the time to complete a task on a finished system), are very expensive / time consuming to use

HCI has generated several approaches that offer

suggestions for redesign, but they focus on representation and require

extensive, detailed modeling. There is no ONE approach that addresses

all types of activity that can lead to constructive suggestions for

improving the system or device, that avoids details and allows for the

identification of similar problems down the road. In HCI, no one model

is perfect- each one has its own limitations, which is why we should know

about the cognitive dimensions framework.

Alan Blackwell and Thomas Green propose the cognitive dimensions framework, which allows researchers and designers to 'talk together' (DISCOURSE) about evaluation. It is much lighter and easier to use than the above two main types of evaluation.

Cognitive Dimensions Framework

- not a method; it is a set of discussion tools; used

by the designers themselves

- attempts to IMPROVE DISCUSSION through a SHARED

VOCABULARY

- main process is to DISCUSS TRADEOFFS of design

decisions among designers by using a SHARED VOCABULARY

- Cognitive Dimensions Framework suggests a handful of basic 'Notational Dimensions' in the shared vocabulary: VISCOSITY, HIDDEN DEPENDENCIES, ABSTRACTION LEVEL, PREMATURE COMMITMENT

Notational Dimensions (the SHARED VOCABULARY)

- each dimension describes an aspect of an information structure

that is reasonably general

- Viscosity: resistance to change

- Visibility: ability to view the components

easily

- Premature commitment: constraints on the order

of doing things

- Hidden dependencies: important links between

entities that are not visible

- Role-expressiveness: the purpose of an entity

is readily inferred

- Error Proneness: the notation invites mistakes

and the system gives little protection

- Abstractions: types and availability of

abstraction mechanism

- Secondary notation: extra information in means

other than formal syntax

- Closeness of mapping: closeness of

representation to domain

- Consistency: similar semantics are expressed in

similar syntactic forms

- Diffuseness: verbosity of language

- Hard Mental Operation: high demand on cognitive

resources

- Provisionality: degree of commitment to actions

or marks

- Progressive Evaluation: work-to-date can be

checked at any time

Evaluation using the CD Framework

There are two steps to evaluation using the cognitive

dimensions framework:

- Decide what generic activities a system is desired to

support. Each generic activity has its own requirements in terms

of cognitive dimensions.

- Scrutinize the system and determine how it lies on

each dimension. If the two profiles match, all is well.
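The two-step comparison can be pictured as matching a system's profile against what an activity tolerates. The profiles below are hypothetical examples made up for this sketch; a real evaluation would cover more dimensions and rely on the evaluators' judgment rather than a lookup.

```python
# For each activity, the levels of each dimension it can tolerate (assumed values).
ACTIVITY_PROFILES = {
    "transcription": {
        "viscosity": {"low", "high"},
        "hidden dependencies": {"low", "high"},
    },
    "modification": {
        "viscosity": {"low"},
        "hidden dependencies": {"low"},
    },
}

def mismatches(activity, system_profile):
    """Dimensions where the system's level is not tolerated by the activity.

    Dimensions missing from system_profile are reported too, since they
    have not been assessed yet.
    """
    required = ACTIVITY_PROFILES[activity]
    return sorted(
        dimension
        for dimension, acceptable in required.items()
        if system_profile.get(dimension) not in acceptable
    )

# Hypothetical assessment of some notation or tool.
system = {"viscosity": "high", "hidden dependencies": "low"}
print(mismatches("transcription", system))
print(mismatches("modification", system))
```

An empty list corresponds to the case where the two profiles match.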

Tradeoffs

What is nice about the CD framework is that it makes explicit the design maneuvers in which one dimension is 'traded-off' against another. There are certain known relationships: for example, one way to reduce viscosity is to introduce abstractions. Abstractions might reduce viscosity and increase visibility...

Questionnaires

Because CD Framework is so general, it has been used to

structure questionnaires to get user-feedback on certain aspects (notational

dimensions) of a system. Because the notational dimensions are very

general, feedback can be very useful and may be unanticipated by the

designer (a good thing).


Chapter 6: Users' Mental Models

- stems from cognitive psychology

- why? because understanding users' mental models can enrich our

understanding of the use of cognitive artifacts

Cognitive psychologists think the UMM is

at the heart of understanding HCI- reasoning behind what we do

However, the term is overused in so many different ways that it has lost much of its usefulness

Still, mental models are a very important and useful construct, with many areas that can still be researched

Cognitive psychology foundations to

mental models:

Idea 1: Mental content vs.

cognitive architecture: mental models as theories

-

we use 'bounded rationality' - a

process of using readily-available knowledge to make a decision.

(Note not ALL available information, because we make a quick decision

and don't dig too deep for info)

- cognitive psychologists focus on

the 'structure' (human information-processing architecture) of the

mind more than the 'content' (focus on what an individual knows /

believes) of the mind to explain bounded rationality because

'structure' is more generalizable than content (individual /

incidental).

- most cognitive psychology theories focus on the structure of the mind rather than its content (to achieve generalizability). In HCI, however, we need to focus on singular behaviors (content), as they are a staple of usability studies, etc. For example, a software 'walkthrough' will lead to diagnoses about misleading features of an interface design.

-

users use mental models of familiar

things to construct mental models of unfamiliar things (predicting the

behavior of an ATM is based on other, familiar computer usage)

Idea 2: Models vs. Methods:

mental models as problem spaces

- a characterization of skill: a

collection of methods for achieving tasks

- in cog. psych, solving a problem

involves searching through a mentally constructed problem space of

possible states (a.k.a. mental simulation)

- as we gain skill, we know what

situation calls for what method (less searching / problem solving, more

routine)

Idea 3: Models vs. Descriptions:

Mental models as Homomorphisms

- mental models are 'analog

representations' (share the structure of the world it represents)

- similar to pictures, 'simple static

models' represent the world, with objects / relations

- 'dynamic models' are homomorphisms (many-to-one mappings rather than one-to-one), and can resemble state-transition networks

Idea 4: Models of Representations:

Mental models can be derived from language, perception or imagination

Idea 5: Mental representations of

representational artifacts

- 'goal space' is the domain of the

users' goals (such as spaces and lines on a paper when we are using a

word processor)

- 'device space' is the problem space

that the user searches for solutions to the goal (such as characters /

operations in a word processor)

Idea 6: Mental models as

computationally equivalent to external representations

- it is possible to have two or more

representations of the same information / task, but these are 'informationally

equivalent' if one is inferable from the other and vice versa

- two or more 'informationally equivalent' representations may or may not be 'computationally equivalent': they are computationally equivalent only when the cost of accessing the information / doing the task is also comparable

- a 'cognitive map' is a mental

representation that allows users to navigate through an environment

(computationally equivalent to an external map) through process of internalization

Case studies:

What are 'yoked' state spaces?

'yoked state spaces' can motivate an

informal analysis of the fit between the representational capacities of

a device and the purposes of a user (calendar / appointment diary

example)

support the process of

internalization - computationally equivalent mental versions of

external representations


Chapter 7: Exploring and Finding

Information

How do people forage for information? People are informavores (George Miller): we are organisms hungry for information about the world and ourselves. This chapter draws much from evolutionary theory.

Two main concepts of this chapter:

- Information-Foraging Theory

- Information Scent

Information-Foraging Theory

- a metaphor from evolutionary theory: deals with

understanding how user strategies and technologies for information

seeking, gathering, and consumption are adapted to the flux of

information in the environment

- built on the notion of hunting / gathering

Information Scent

- concerns the user's use of environmental cues in

judging information sources and navigating through information spaces

Both ideas evolved in reaction to the technological

developments associated with the growth of globally distributed, easily

accessible information, and the theoretical developments associated with the

growing influence of evolutionary theory in the behavioral and social

sciences.

Following the metaphor: What do we do when we hit a roadblock (metaphorically like hitting a stream, where we lose our 'information scent')? We need to search around to pick up the scent again. Much of the probability of success depends on the interface design (whether it is good and can lead us in the right direction: provide clues as to which choice to make next, eliminate bad paths, etc.) If we lose track of our 'scent' and accidentally pick up the wrong scent, we lose trust in the system. Also, how far down the wrong path (scent) will we go before we realize we are on the wrong path?

Adaptation vs. Exaptation

this chapter's approach sees users as being adaptable.

Users are complex adaptive agents who shape their strategies and

actions to be more efficient and functional with respect to their

information ecology.

Adaptationist approaches became mainstream in the 1980s,

as a reaction to ad-hoc models on human cognition (cognitive &

perceptual tasks). This is in contrast to mechanistic

approaches of the time, such as the MHP (Model Human Processor, Card et al., 1983)

Adaptationist approaches reverse-engineer the problem-

examining what environmental problems are being solved and why

cognitive and perceptual systems are well adapted to solving them

Exaptation: a mechanism that evolved to solve one problem is co-opted to solve a similar one (a way of generalizing our knowledge: we can solve similar but different problems by recognizing shared themes in the problems)

Extending the Information Foraging Theory Metaphor:

- exaptation of food-foraging mechanisms and strategies for information foraging: natural selection favored those of our ancestors with better foraging strategies, who had better success

- the economics of attention and the cost

structure of information: a wealth of information creates

a poverty of attention- we need to efficiently allocate our attention to

RELEVANT information. Information systems should evolve to provide

more valuable information per unit cost.

- relevance of optimal-foraging theory and

models: optimal foraging from study of animals- who

try to get the most nutritious food with the least amount of effort.

With information, we want the richest, most relevant information in the

least amount of effort

Optimal-Foraging Theory

Goal is to optimize the information we receive to be the

most rich, relevant to our information needs. Optimization models

include three major components:

- Decision assumptions: specify the decision problem to be analyzed (e.g., how much time to spend processing a collection of information)

- Currency assumptions: identify how choices are

evaluated (information value = currency)

- Constraint assumptions: limit / define the

relationships among decision / currency variables (rise out of task

structure / interface / knowledge of population)

In general, all tasks can be analyzed according to the value of the resource currency returned and the costs incurred. Costs are classified as resource costs (what is spent to get it) and opportunity costs (the benefit that could have been gained by doing something else instead)
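A small numeric sketch of this trade-off, assuming the rate-of-gain form often used in optimal-foraging models, R = G / (T_B + T_W), where T_B is the time spent getting to an information patch and T_W the time spent working within it. The diminishing-returns gain curve and its parameters are hypothetical.

```python
import math

def gain(t_within: float, g_max: float = 10.0, tau: float = 20.0) -> float:
    """Information gained after t_within seconds in a patch.

    A made-up diminishing-returns curve: early effort pays off most.
    """
    return g_max * (1 - math.exp(-t_within / tau))

def rate_of_gain(t_within: float, t_between: float) -> float:
    """R = G / (T_B + T_W): value gained per unit of total time spent."""
    return gain(t_within) / (t_between + t_within)

def best_within_time(t_between: float, horizon: float = 300.0, step: float = 0.1) -> float:
    """Grid search for the within-patch time that maximizes the rate of gain."""
    candidates = [i * step for i in range(1, int(horizon / step) + 1)]
    return max(candidates, key=lambda t: rate_of_gain(t, t_between))

# The costlier it is to reach the next patch, the longer it pays to stay.
print(best_within_time(t_between=5.0))
print(best_within_time(t_between=60.0))
```

In design terms, lowering the cost of moving between sources (for example, faster search results) shifts the best strategy toward shorter visits to each source.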

Scatter / Gather

- an interaction technique for browsing large

collections of documents

- we cluster documents, then make a cluster hierarchy

- very messy looking interface on p. 170 using this-

bad HCI!

- gather resources, scatter into categories

As Designers: we want to design systems that

provide the richest information, with the least cost to access it (easy

information retrieval) - can be very useful in web design and search engines

Current Status of IFT: Internet Ecology

is being studied- looks at complex global phenomena that yield predictions

on Internet usage and distribution of users over web sites


Chapter 8: Distributed Cognition

Almost every work situation requires someone working with

other people

HCI hasn't had a way into this problem because cognitive

theory tells us little about social behavior, and social science is hard to

apply directly to design

Ways in which people use tools (artifacts) to support

their goals is poorly understood

Distributed Cognition grew out of a

need to understand how information processing and problem solving could be

understood as being performed across units LARGER than the individual

- cognitive scientists (psychology) don't have to abandon their background

to understand this: we just shift from focusing on 'information in

the head' to 'information in the world' - examine things 'in

the wild'

- how to understand how intelligence is manifested at the systems

level rather than the individual level

Designing Collaborative Technologies

CSCW (Computer-Supported Cooperative Work) shifts the focus of HCI from the user to the group (multiple, codependent users and their social network)

we focus on the problem solving of the group as a

cognitive problem

we focus on distributed cognition within a context

- drawing on actors and other features within the environment that

allow problem solving (socially distributed cognition)

the goal of analysis: describe how

distributed units are coordinated by analyzing interactions among

individuals, the representational media used, and the environment in which the activity takes place

shift from traditional HCI (micro-structure level) to

macro-structure level (ecological design - a turn to the social)

this has led ethnography - an anthropological

approach to collecting data about the problem domain - to become a central

feature of CSCW (see Ch. 13)

Cognition

- definition: referring to all of the

processes by which the sensory input is transformed, reduced, stored,

recovered, and used

- cognitive science: the problem

solving and the organization of knowledge about the problem domain

- The dominant paradigm for cognition,

Information-Processing Theory, proposes that problem spaces (the

representation of the operations required for a given task) are abstract

representations. There is no reason this theory needs to be

restricted to an individual - any unit performing these activities can

be described as a cognitive entity

- artifact: any man-made or

modified objects (Cole, 1990)

- cognitive artifact: a subset of

artifacts - those that aid cognition ('knowledge in the world' -

Norman; memory aids, etc.). These can increase human potential by

extending our abilities, and can also transform the task itself- which

can allow the user to re-allocate resources for more efficient task

completion

- with distributed cognition, this re-allocation of

resources can take place across the group of individuals, for even more

efficient task completion

- this has led to new theories on "systems

perspective" that focus on describing the features of a system:

the people, artifacts, and the means of organizing these into a

productive unit

- external cognition: described by Preece as a way of externalizing information (creating and using information in the world rather than performing logic operations in the head). It reduces memory load, simplifies cognitive effort by offloading computation onto external media, and lets us trace changes through annotation or by using a new representational form.

- cognitive ethnography: analyzes

the workplace to determine the role of technology and work practice in

system behavior

Distributed Cognition and Computing

- examines the relationship between the computer and the social group

- How is information about a domain stored

(represented) in a system?

- functional system: the actors and

artifacts of the system (complex cognitive system / functional unit) -

derived from the activity system (activity theory)

- make use of abstraction to describe a

cognitive system - away from detailed design- focus on the general

functions of the system (nonspecific: what it DOES rather than

what it IS)

- A distributed cognitive system consists of:

(see Fig. 8.2 - p. 207) (note: somewhat resembles MHP)

- sensory mechanism - input from outside the

cognitive system - passes to information processing unit

- action generator - allows production of

output from the cognitive system, can provide feedback to the

information processor

- memory - a stored representational state

to order subsequent activities, receives representations from the

information processor and passes back to it

- information processor - receives

representations from the sensory system and acts upon them:

transforms them, combines them or destroys them

- when a cognitive system is distributed over a number

of individuals, cooperation is required among the individuals in order

to bring their problem-solving resources into conjunction with each

other. This is crucial to their cooperative action

- a problem faced by groups in performing distributed

cognition is the ability to organize a task into component parts that

can be performed by individuals- and then re-assembled at the end

(distribute the load fairly evenly across group members)

- we need to proactively structure distributed

group labor so that effective work can be done

- there needs to be effective communication and

coordination between group members (artifacts with universal

meanings, etc)

- DCog vs. Ethnomethodology: Ethnomethodologically informed ethnography focuses on the ordering of the activity (coordination), but not on the work itself
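The four components of a distributed cognitive system listed above can be sketched as a small class. This is only an illustrative sketch, not the model from Fig. 8.2: the class name `FunctionalSystem`, the `transform` rule, and the bearing reports are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class FunctionalSystem:
    """Toy functional unit, described by what it DOES rather than what it IS."""
    # Hypothetical processing rule: (representation, memory) -> new representation
    transform: Callable[[str, List[str]], str]
    memory: List[str] = field(default_factory=list)   # stored representational states
    outputs: List[str] = field(default_factory=list)  # what the action generator produced

    def sense(self, stimulus: str) -> str:
        # Sensory mechanism: takes input from outside the cognitive system.
        return stimulus.strip().lower()

    def process(self, representation: str) -> str:
        # Information processor: transforms the representation, consulting
        # memory, and passes the result back to memory.
        result = self.transform(representation, self.memory)
        self.memory.append(result)
        return result

    def act(self, representation: str) -> str:
        # Action generator: produces output from the cognitive system.
        self.outputs.append(representation)
        return representation

    def step(self, stimulus: str) -> str:
        return self.act(self.process(self.sense(stimulus)))


def number_reports(representation: str, memory: List[str]) -> str:
    # Assumed rule: label each report with its position in the sequence.
    return f"fix {len(memory) + 1}: {representation}"


team = FunctionalSystem(transform=number_reports)
team.step("  Bearing 045 ")
team.step("Bearing 050")
print(team.memory)
```

Nothing in the class says whether the unit is one person or several people plus their artifacts, which is the point of describing the system at the functional level.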

Doing DCog: Cognitive Ethnography

Unit of analysis: the functional system

(individuals, artifacts, and their relations)

Look for information-representation transitions that

result in the coordination of activity and computations:

- examine the way that the work environment structures

work practice,

- changes within the representational media,

- the interactions of the individuals with each other,

- the interactions of the individuals with system

artifacts


Chapter 9: Cognitive Work

Analysis (sometimes called sociotechnical work analysis)

Cognitive engineering - the

analysis, modeling, design and evaluation of complex sociotechnical systems

- term coined by Norman after the Three Mile Island accident, which helped launch the field (how to design human-machine systems so they are safer / more reliable)

- goal was to design systems with good user interfaces, so that in

unanticipated situations, the user would be able to safely handle the

physical system

Cognitive Work Analysis (CWA) -

way of analyzing human cognitive work - describes the forces that shape

human cognitive work in complex real-time systems (where humans control

complex physical processes). The goal is to lead to systems that

better support human-operator adaptation when operators are confronted with

unanticipated variability. CWA is a multidisciplinary approach to

cognitive engineering.

Often, the human operator is very

separated from the actual process he is managing, due to a poor interface.

CWA is an approach to cognitive engineering that aims to help find better

ways of connecting the operator with what he is managing (physical system

behind the technology) - so in unexpected situations, adaptive behavior (by

the operator) will be successful.

Ecological Interface Design (EID)

- subset of CWA - a set of principles to guide interface design (influenced

by ecological psychology). Said to be one of the most useful products

of CWA. Makes use of WDA and WCA (see below), as well as some

ecological concepts.

formative model - an approach

that describes the requirements that must be satisfied so that a system can

behave in a new, desired way. CWA is a formative approach to work

analysis.

Phases of Analysis in CWA:

Based on Figure 9.3, p.230

- each narrows down the action possibilities further from the previous

phase

1. Work Domain Analysis (WDA):

find purpose and structure of work domain; often represented in

abstraction-decomposition diagrams and abstraction hierarchies

- constraints (physical & purposive) within which activity takes place

(NOT the activity itself, though)

- often 5 levels of abstraction: functional purpose, abstract

function, generalized function, physical function, and physical form

(see Figure 9.7 on p. 242 for an example)

2. Control Task Analysis (CTA):

find what needs to be done in the work domain so that it can be

effectively controlled; often represented in maps of control task

coordination, decision ladder templates

- continues to narrow down the 'dynamic space of functional action

possibilities' by defining constraints that must be satisfied when work

functions are coordinated over time and when effective control is

exercised over the work domain

3. Strategies Analysis (SA):

find ways that control tasks can be carried out; often represented in

information flow maps

- 'HOW control tasks can be done' is analyzed (we don't care by

whom)

- focus on general classes of strategies and their intrinsic demands

4. Social-Organizational

Analysis (SOA): find who carries out work and how it is

structured; often represented by annotating the information flow maps of

#3

- focus on the division and coordination of work (the content

(information passed between actors)), and the social organization of

the workplace (the form (behavioral protocol of communications))

5. Worker competencies

analysis (WCA): find the kinds of mental processing supported;

often represented by skill-based, rule-based, and knowledge-based

behavior models
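The idea that each phase narrows the action possibilities left by the previous one can be sketched as a chain of filters. This is a loose illustration under stated assumptions, not a CWA tool: the candidate actions and the boolean flags standing in for each phase's constraints are hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Action = Dict[str, object]
Phase = Tuple[str, Callable[[Action], bool]]


def narrow(actions: List[Action], phases: List[Phase]) -> List[Tuple[str, List[str]]]:
    """Apply each phase's constraint in order; record which actions survive."""
    trace = []
    remaining = list(actions)
    for phase_name, satisfies in phases:
        remaining = [a for a in remaining if satisfies(a)]
        trace.append((phase_name, [str(a["name"]) for a in remaining]))
    return trace


def candidate(name: str, **flags: bool) -> Action:
    # Every constraint is assumed satisfied unless a flag says otherwise.
    base = {"within_domain": True, "controllable": True, "has_strategy": True,
            "has_actor": True, "within_competence": True}
    base.update(flags)
    return {"name": name, **base}


# Hypothetical action possibilities for an operator of a physical process.
candidates = [
    candidate("vent to atmosphere", within_domain=False),
    candidate("rebuild pump", controllable=False),
    candidate("reroute coolant", within_competence=False),
    candidate("open relief valve"),
]

phases: List[Phase] = [
    ("WDA", lambda a: bool(a["within_domain"])),
    ("CTA", lambda a: bool(a["controllable"])),
    ("SA", lambda a: bool(a["has_strategy"])),
    ("SOA", lambda a: bool(a["has_actor"])),
    ("WCA", lambda a: bool(a["within_competence"])),
]

trace = narrow(candidates, phases)
for phase_name, names in trace:
    print(phase_name, names)
```

The order of the phases matters only in that later phases never add possibilities back; each one works inside the space the earlier ones left open.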

CWA was influenced by systems

thinking and ecological psychology. Both emphasize that the

human-environment system needs to be the unit of analysis, with the

environment being a primary unit of analysis in an actor's goal-oriented

behavior.

Systems Theory:

- the whole is more than the sum of its parts (study the whole

environment)

- study the relation between parts, not the properties of the parts

- study cybernetics - open vs. closed systems - and how outside

disturbances affect the system

Ecological Psychology:

- CWA wants to build 'ecological information systems' that can be operated with an ease closer to that with which the natural world is navigated

- EID (ecological interface design) is an approach to building interfaces

using principles of CWA

- "ecological science rests on the principle that systems in the

natural and social world have evolved to exploit environmental regularities"

- Rosson & Carroll

- ecological psychology is the study of the way organisms perceive

and respond to regularities in information

- key concept #1: environments and information should be

described in terms that reveal their functional significance for an actor

rather than being described in objective actor-free terms

- key concept #2: the affordances of an object are the

possibilities for action with that object, from the point of view of a

particular actor

- key concept #3: the actor uses direct perception, which

proposes that certain information meaningful to an actor is automatically

picked up from the visual array

The CWA System Life Cycle (SLC):

requirements definition, modeling and simulation, tender evaluation,

operator training, system upgrade, and system decommissioning. This

area may see much development in the next decade.

At the end of the chapter, many case

studies are presented. They fall into 2 categories: display

design, and evaluations of human-system integration.


Chapter 10: Common Ground in

CMC: Clark's Theory of Language Use

HCI has come to encompass technologies that mediate

human-human communication (chat, etc)

Production + Comprehension = Communication

Clark's theory of common ground: we use existing

common ground to develop further common ground and hence to communicate

effectively

- Different types of common ground:

- a proposition is common ground if: all the

people conversing know the proposition, and they all know that

everyone else knows the proposition.

- communal common ground: "we both

speak English. we are professionals. we live in the same

town."

- personal common ground achieved before the

conversation: "our joint purpose is to sign off on the

plan."

- personal common ground developed during the

conversation: "the door on the bedroom that faces south

has to be moved. We can see the bedroom facing south on the

plan. The plan can go to the builder."

- Formalizes collaborative activity as "joint

action"

- language is a joint action involving two or

more people

- Describes the process by which common ground is

developed through joint action

- face to face conversation uses common ground

to reduce the effort required to communicate

- face to face conversation develops common

ground

- face to face conversation involves more than

just words (non-verbal comm, etc.)

- face to face conversation is a joint action

- face to face conversation is "basic" (basis

for understanding language behavior)
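The two-part condition for a proposition to be common ground (everyone knows it, and everyone knows that everyone else knows it) can be written as a small check. This sketch covers only the two levels stated in these notes. The names `knows`, `knows_that_knows`, Ann, and Bob are hypothetical.

```python
from typing import Dict, Set


def is_common_ground(
    proposition: str,
    knows: Dict[str, Set[str]],
    knows_that_knows: Dict[str, Dict[str, Set[str]]],
) -> bool:
    """True if all participants know the proposition and each knows the others do."""
    people = list(knows)
    everyone_knows = all(proposition in knows[p] for p in people)
    everyone_knows_others_know = all(
        proposition in knows_that_knows.get(p, {}).get(q, set())
        for p in people
        for q in people
        if p != q
    )
    return everyone_knows and everyone_knows_others_know


english = "we both speak English"
door = "the south bedroom door has to be moved"

# What each person knows.
knows = {"Ann": {english, door}, "Bob": {english, door}}

# What each person knows the other knows. Bob has not yet had any sign
# that Ann knows about the door, so that proposition is not yet grounded.
knows_that_knows = {
    "Ann": {"Bob": {english, door}},
    "Bob": {"Ann": {english}},
}

print(is_common_ground(english, knows, knows_that_knows))
print(is_common_ground(door, knows, knows_that_knows))
```

In this toy setup, an acknowledgement from Ann during the conversation would add the door proposition to what Bob knows Ann knows, which is how personal common ground grows through joint action.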

Grounding: the process of making sure that another person sufficiently understands you. If they do not, you supply more information until they do. Often facial expressions (non-verbal behavior) or questions signal that the other person needs more information.

Summary: Clark's theory of language use is

applicable to CMC (computer mediated communication). The usage /

application of this theory to designing systems will be more evident as time

goes on- and will likely be very useful to new systems supporting video

conferencing, asynchronous and synchronous communication, etc.

However, a very useful set of guidelines based on this has yet to be

developed.


Chapter 11: Activity Theory

History / Foundation for Activity Theory:

A basic perspective on HCI that came from Scandinavian

roots in the early 1980's

an 'Action Research Approach' that focused on active

cooperation between researchers and 'those being researched' - researchers

entered into an active commitment to improve the situation of those they

researched

roots in social psychology, industrial sociology and critical psychology. Influenced by the introduction of the personal computer and the move away from the mainframe.

There were many problems with existing HCI and system

design at the time. Much of the system design being done at the time

consisted of heavy task analysis, with consideration of a generic novice

user working alone. A new theoretical foundation was needed!

Foundations of Activity Theory:

- analysis and design for a particular work practice

with concern for qualifications, work environment, division of work, and

so on;

- analysis and design with focus on actual use and the

complexity of multi-user activity. In particular, the notion of

the artifact as mediator of human activity is essential;

- focus on the development of expertise and of use in

general;

- active user participation in design, and focus on use

as part of design.

Activity theory shares with ecological psychology the

attempt to move away from the separation between human cognition and human

action, and an interest in actual material conditions of human acting.

Activity theory differs by adding a notion of motivation. Activity theory values hierarchical analysis, task analysis, etc. Analysis in activity theory takes place on all levels at the same time, not in sequence (see below). Activity theory moves away from the generic user.

Unit of analysis in Activity Theory:

motivated activity. This is mediated by socially produced artifacts

(tools, language, representations, etc.)

Activity is mediated through a computer or other tool

Unlike cognitive science, activity theory does not assume a boundary between internal representations and external representations. A basic feature of activity theory is that it unifies consciousness and activity.

Development: The most distinctive feature of Activity Theory is its focus on development, which sets it apart from other materialist accounts in computer science.

Nardi's Five Principles of Activity Theory:

- object-orientedness

- hierarchical structure of activity

- internalization and externalization

- mediation

- development

Mediation is folded in with each of the other four

principles, resulting in four categories of concerns:

- means / ends

- environment

- learning / cognition / articulation

- development

Focus and Focus Shifts:

Activity theory starts with a perspective / point of view

- which yields the objects to work with. However, the focus shifts

that indicate the dynamics of the situation are the main point of concern in

the analysis.

(see Index page for more on Focus

Shifts)

Summary:

Nardi suggests that activity theory is a powerful

descriptive tool rather than a predictive theory. Carroll somewhat

disagrees- because in this chapter concrete techniques were presented that

show how it can be used to focus on computer-mediated activity (a.k.a. HCI)


Chapter 12: Applying Social

Psychology Theory to Group Work

[Figure: Variations in CSCW research, drawn as a cube with three axes]

Approach: Study ... Build

Focus: Technical Infrastructure, Architecture, Application, Task, People

Group Size: Individual ... Group ... Society

CSCW (Computer-Supported Cooperative Work) is a subfield of HCI that aims to build tools to help group work, learning and playing. It examines how groups incorporate tools into their routines and the impact of CMC on group processes and outcomes.

Two main goals of CSCW:

- to support distributed groups (distributed groups are typically not as effective as collocated groups)

- to make collocated groups more effective (CSCW systems are designed to ameliorate some of Steiner's process loss)

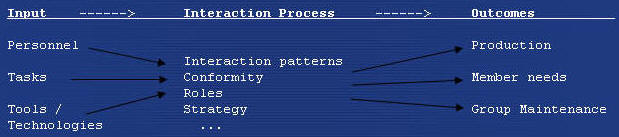

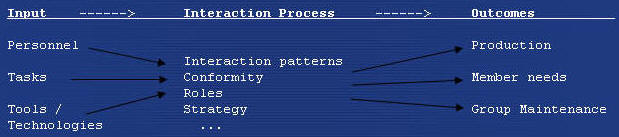

Elements of an input-process-output model of groups:

Production Outcomes: multidimensional

- groups do better than individuals because of aggregation (different people contribute unique resources to the group)

- groups do better than individuals because of synergy (the increase in effectiveness that comes about from joint action and cooperation)

- group maintenance and member support: groups need to have the capability to work together in the future (group maintenance) and to support the needs of their individual members (member support)

Inputs: both inputs and the processes that group members use will determine the success of the group

- personnel: diversity can be a mixed blessing - more viewpoints, but more arguments

- task: McGrath states that tasks can be: generative (e.g. brainstorming); intellective (e.g. correct answers); problem-solving (e.g. open-ended questions). Groups in knowledge work tend to be as good as their second-best member and follow a 'truth-supported wins' heuristic... a process of aggregation and synergy

- technologies: groups will be more effective if they have more qualified personnel and appropriate technology (applies to collocated and distributed groups)

Interaction Process: the

way group members interact can directly influence group outcomes and

mediate the impact of inputs on the group.

- focus is on communication (which takes time away from group production); it can be characterized according to volume, content, structure and interactive features

- the right volume, content, structure and interactive features depend on the particular task

- uncertainty is a key feature that determines whether a particular technology will be appropriate

- technologies restricting communication are less acceptable if tasks are more uncertain

Process Losses

- Steiner: being in a group degrades performance from what the members could be producing individually

- can be caused by mis-coordination (production blocking, schedule conflicts, misaligned goals, etc.)

- can be caused by reductions in motivation (slackers, not being present for goal setting, being around other slackers, social loafing)

- production blocking is when people cannot get work done because they are busy listening to and participating with other group members

- social loafing is when people reduce their effort because they think their efforts are being pooled with the efforts of other group members. People tend to work harder individually, when they realize their contributions to the group are unique, or when they like the group (it's attractive / valuable)

- social pressure (such as evaluation apprehension) can cause production losses. One way to lighten this is to introduce anonymity, but this must be considered carefully (a set of trade-offs)

Anonymity: a way to offset

social pressures, but can cause social loafing (trade-off)
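Steiner's notion of process loss is commonly summarized as: actual productivity = potential productivity - process losses. A minimal sketch of that bookkeeping follows. Treating potential productivity as the plain sum of what members would produce alone is an assumption made here for illustration, and all numbers are hypothetical.

```python
from typing import List


def actual_productivity(
    individual_outputs: List[int],
    coordination_loss: int,
    motivation_loss: int,
) -> int:
    """Potential productivity minus losses from coordination and motivation."""
    # Assumption: potential productivity is the sum of individual outputs.
    potential = sum(individual_outputs)
    process_losses = coordination_loss + motivation_loss
    return potential - process_losses


# Hypothetical three-person group: members would produce 10, 8 and 6 units alone.
alone = [10, 8, 6]

# Production blocking costs 3 units; social loafing costs 2 units.
with_losses = actual_productivity(alone, coordination_loss=3, motivation_loss=2)

# Suppose anonymity lowers evaluation apprehension but increases loafing:
# the trade-off shows up as one loss term shrinking while another grows.
print(sum(alone), with_losses)
```

The sketch leaves out process gains such as synergy, so it only captures the loss side of the input-process-output model.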

When CSCW researchers turn to the social-science literature outside their own field, they more often consult ethnographic research than experimental social psychology

Carroll says that social psychology research has been underexploited in CSCW, largely due to mismatched goals and values between HCI/CSCW research and social psychology research

social psychology has provided a rich body

of research and theory about principles of human behavior, which should be

applied to the design of HCI applications- especially those supporting

multiple individuals who are communicating or performing a collaborative

task


Chapter 13: Studies of Work in

HCI

Jonathan Grudin argued in 1990 that HCI was passing from the 4th stage to the 5th stage: from "a dialog with the user" to a "focus on the work setting"

This leads into the topics of this chapter, which get

into ethnography, situated action, and ethnomethodology.

Ethnomethodology

- has been the predominant approach in HCI's emerging concern with the workplace

- utilizes an ethnographic / fieldwork approach

- is concerned with the analysis of work and the

workplace

- ethnomethodology & conversation analysis

Many factors precipitated the adoption of CSCW:

- Lucy Suchman's "Plans and Situated Actions" - a move from the individual user to a computer placed in a social context. She criticized cognitive scientists who failed to take into account the social and cultural world. She undermined the idea that we 'plan' what we're going to do, replacing it with the idea that action takes place within sociocultural contingencies that cannot be covered by a plan (owes much to ethnomethodology)

- Suchman demonstrated that work could be studied as

part of the process of designing systems for the workplace (draws from

ethnomethodology within sociology and conversation analysis)

- the Scandinavian Participatory Design movement:

led to the involvement of the workers in the design process, and

emphasized: the flexibility of work activities, and that work is

'accomplished' rather than a 'mechanical process' (Activity Theory?)

Overview: A Paradigmatic Case

- there are a number of ways in which

ethnomethodologically grounded studies of work have been applied to HCI.

One way is to analyze the impact that a system has on the work that is

done in the setting into which it is introduced

- the study of work analyzes the methods through which

a domain of work is organized by those who are party to it from the

inside, and makes clear that modeling a workflow using formal processes

as a resource only partially grasps the work that a system is meant to

support.

- studying work reveals a domain of work practices and

methods that are crucial for the efficient running of an organization

(characterized as "hidden" work of organizations)

- often, this 'hidden' work needs to be revealed for a

successful analysis and design

Scientific Foundations

Ethnography

- theorize work

- empirical

- labor process theory (describe work as the

reproduction of capital labor- not looking at the content of work.

serves as a structural orientation)

- an Interactionist approach: attempts to develop

an understanding of work from the inside (the actual workplace)

- Studies of work within HCI and CSCW tend to employ an

ethnographic approach. (Anderson: ethnography in HCI has

really stood for "fieldwork" grounded in ethnomethodology)

Situated Action

- Suchman: human action takes place within contingencies. Because of these contingencies, participants cannot project in detail how any one conversation may unfold before they engage in the course of social interaction. Social actions are situated within arrangements and interaction.

- Social action should be analyzed within the

situations in which it occurs in order to find how a course of action

has been put together

Ethnomethodology

- primary concern is with social order

- social order is essential: people display their

orientation to the ordered properties of actions and interaction, and

use the orderly properties when carrying out their social conduct

- members' phenomena: order is to be found; order

is a matter for ordinary members of the society

- members' accomplishment: order is the product of consensus brought about by reciprocally shared normative values and rules, whereas Marxist sociology has argued that order is the product of constraint, with people subject to the power of a coercive state exercised over them by its institutions

- Ethnomethodology has explored the in situ

production of social order through two broad domains of interest:

Conversation Analysis and Ethnomethodological Studies of work.

Both are work methodologies.

Conversation Analysis

Turn taking in conversation is organized by participants on a moment-by-moment basis. Turn taking is an interactional phenomenon, relating to multiple participants and organized as a collaborative matter.

Conversation analysis has developed as the study of

turn taking in conversation. It underpins some of the ways to

study work, such as in the development of natural language interfaces.

Ethnomethodological Studies of Work

The second major preoccupation of ethnomethodology is

its interest in work. Ethnomethodological studies of work attempt

to examine domains of work in order to understand what are the

particular constitutive features of work, and how in their actions with

one another, people are recognizably engaged in doing their work.

Critique:

- work is not organized as 'rule following' but rather

in the contingencies and improvisations of applying rules

- Suchman critiqued a workflow system based on speech acts (Searle), arguing that conversation analysis can underscore the situated and unfolding character of conversational exchanges

Summary:

In the past 10 years, there has been a shift in HCI from

the user to the social world (work setting) in systems design. The

door has been opened to study the work setting, and information being

gathered from the work place will allow us to design better systems.


Chapter 14: Upside-Down

Algorithms - Computational Formalisms & Theory

This chapter is about how to gain insight into the

context of HCI design and evaluation by using theoretical concepts

(computational theory) and methods (formal methods). We must

understand our raw material (the computer itself) and that drawings,

sketches, etc. are part of the design process.

Formalism is about being able to represent things in such

a way that the representation can be analyzed and manipulated without regard

to the meaning. An example of this is State Transition Networks.
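A State Transition Network can be written down as plain data and then analyzed without knowing what the states mean. The sketch below assumes a hypothetical login dialogue; the state and action names are invented for illustration. It asks two purely formal questions: which states can never be reached, and which states offer no way out.

```python
from collections import deque
from typing import Dict, Set

# Hypothetical dialogue: state -> {user action -> next state}
stn: Dict[str, Dict[str, str]] = {
    "start": {"enter_name": "named"},
    "named": {"enter_password": "ready", "clear": "start"},
    "ready": {"submit": "done", "clear": "start"},
    "done": {},
    "help": {"close": "start"},  # note: no transition leads INTO this state
}


def reachable(network: Dict[str, Dict[str, str]], start: str) -> Set[str]:
    """Breadth-first search over transitions, ignoring what the states mean."""
    seen = {start}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        for next_state in network[state].values():
            if next_state not in seen:
                seen.add(next_state)
                queue.append(next_state)
    return seen


unreachable = set(stn) - reachable(stn, "start")
dead_ends = {state for state, moves in stn.items() if not moves}

print("unreachable:", unreachable)
print("dead ends:", dead_ends)
```

Whether a dead end is a defect or the intended final screen is a design question; the formal analysis only surfaces it for the designer to decide.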

Key Features of Formal Descriptions:

- formal analysis: possible to ask questions

about the system from the formal description

- early analysis: analyze early by using rapid

prototypes, etc

- lack of bias: formal analysis helps to break

biases

- alternative perspective: different

representations allow us to see different things about a design,

providing more views of the artifact during design

- forcing design decisions: using formal

representation, the designer is forced to make user-interface decisions

explicitly and communicate those decisions to the implementer

Detailed Description:

- Fitts' Law shows that, no matter what the size or number of the screen buttons, a reasonable typing speed is always faster (3x faster than mouse clicking)

- Touch screens: large targets are better

- Per screen cost: more items per screen the

better... ???

- for small targets, small numbers of well explained

items may be better
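For reference, Fitts' Law is often written in the Shannon form MT = a + b * log2(D/W + 1), where D is the distance to the target and W is its width. The sketch below uses that form to show why larger targets are faster to hit. The constants `a` and `b` are hypothetical placeholders, since real values must be fitted to a device and user population.

```python
import math


def fitts_movement_time(distance: float, width: float,
                        a: float = 0.1, b: float = 0.15) -> float:
    """Predicted pointing time in seconds (a and b are assumed, not measured)."""
    index_of_difficulty = math.log2(distance / width + 1)  # in bits
    return a + b * index_of_difficulty


# Same distance, two target sizes (arbitrary but consistent units).
large_target = fitts_movement_time(distance=300, width=100)
small_target = fitts_movement_time(distance=300, width=20)

print(round(large_target, 3), round(small_target, 3))
```

With these placeholder constants the small target takes longer, which matches the observation that large targets are better on touch screens. The sketch says nothing about typing speed, so it does not reproduce the 3x comparison.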

Reasons for using Formal methods in HCI:

- to analyze the system to assess potential usability

measures or problems

- to describe the system in sufficient detail so that

the implemented system is what the designer intends

- the process of specification forces the designer to

think about the system clearly and to consider issues that would be

missed

Formal Modeling can be done for single user systems or

for cooperative work

Case study: flowcharts of the human-computer

dialogue: work well because they are simple formal methods.

Why:

- useful: addresses a real problem

- appropriate: no more detail than needed

- communication: mini pics and clear flow

are easy to walk through with client

- complementary: different paradigm than

implementation

- fast pay-back: quicker to produce

application

- responsive: rapid turnaround of changes

- reliable: clear code is less prone to

errors

- quality: easy to establish test cycle

- maintenance: easy to relate bug /

enhancement reports to specification and code

Summary:

There once was a time when every computer method had to be formal to be respectable. However, formal models bred problems over time, which grew to the point where the models were written off. We should not write off formal methods, though - look at how successful UML diagramming has been. Using a formal model can often be beneficial, especially for projects that are simple in nature.

The web is an example of a rapidly growing distributed system. Things like bookmarks and the 'back' button are problematic - they restart the application in the middle of a process!

The problem and challenge of formal methods:

whenever we capture the complexity of the real world in formal structures,

whether language, social structures, or computer systems, we are creating

discrete tokens for continuous and fluid phenomena. In doing so, we

are bound to have difficulty (it is impossible to capture everything in minute detail in a program, process, etc.). However, it is only in

doing these things that we can come to understand, to have valid discourse,

and to design. (think of Formal Models as 'naive physics' - as

simple models that can work quickly / easily, but do not accurately

represent reality)


Chapter 15: Design Rationale as

Theory

This chapter aims to show how reflective HCI design

practices (involving design-rationale documentation and analysis) can be

used to:

- closely couple theoretical concepts and methods with

the designed artifacts that instantiate them

- more closely integrate theory application and theory development in design work

- more broadly integrate the insights of different technical theories

Design rationale contributes to theory development in HCI

in three ways:

- it provides a foundation for ecological science in

HCI by describing the decisions and implicit causal relationships

embodied in HCI artifacts

- it provides a foundation for action science in HCI by

integrating activities directed at description and understanding with

those directed at design and development

- it provides a framework for a synthetic science of

HCI in which the insights and predictions of diverse technical theories

can be integrated

Design Rationale

- is documentation and analysis of specific designs in

use

- describes the features of a design, the intended and

possible use of those features, and the potential consequences of the

use for people and their tasks

- this involves observing or hypothesizing scenarios of

user interaction, and describing their underlying design tradeoffs

- helps to make theory more applicable by codifying the

terms and relations of the application domain, and grounding them in

design tradeoffs and decisions

Applying theory in HCI design involves mapping concepts

across domain boundaries, and directing descriptions and analysis to

prescriptions for intervention.

TAF: Task-Artifact Framework

- design rationale is created to guide and understand

the impacts of computing technologies on human behavior

- Task analysis is expressed as scenarios of use. The scenarios include the activity context and the actors' motivations, actions and reactions during the episode

- Claims analysis produces a causal analysis of

the actors' experience, enumerating the features of a system-in-use that

are hypothesized to have upsides and downsides for the actors.

- The design rationale for a system is built up through

analysis of multiple scenarios, often over incremental versions, leading

to a network of overlapping claims.

- Book exemplifies these through MOOsburg example - p.

434
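The relationship between scenarios, claims, and the resulting network of overlapping claims can be sketched as a small data structure. This is an illustrative sketch only: the class names, scenarios, and features below are hypothetical and are not taken from the book's MOOsburg example.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Claim:
    feature: str          # a feature of the system-in-use
    upsides: List[str]    # hypothesized positive consequences for the actors
    downsides: List[str]  # hypothesized negative consequences for the actors


@dataclass
class Scenario:
    title: str
    context: str          # activity context and actors' motivations
    claims: List[Claim] = field(default_factory=list)


def claim_network(scenarios: List[Scenario]) -> Dict[str, List[str]]:
    """Map each feature to the scenarios in which a claim about it was made."""
    network: Dict[str, List[str]] = defaultdict(list)
    for scenario in scenarios:
        for claim in scenario.claims:
            network[claim.feature].append(scenario.title)
    return dict(network)


scenarios = [
    Scenario(
        title="Newcomer explores the space",
        context="first visit, wants to find ongoing discussions",
        claims=[
            Claim("persistent chat log", ["can catch up on history"], ["long logs are tedious to scan"]),
            Claim("shared map", ["shows where activity is"], ["takes up screen space"]),
        ],
    ),
    Scenario(
        title="Regular returns after a week",
        context="wants to see what changed",
        claims=[
            Claim("persistent chat log", ["nothing is lost while away"], ["old remarks may be quoted out of context"]),
        ],
    ),
]

network = claim_network(scenarios)
overlapping = sorted(f for f, titles in network.items() if len(titles) > 1)
print(overlapping)
```

Features that appear under more than one scenario are where claims overlap, and those are the places where tradeoffs from different scenarios have to be weighed against each other.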

Design Rationale: Three scientific foundations:

- Ecological science: rests on the principle that

systems in the natural and social world have evolved to exploit

environmental regularities.

- HCI can be developed as an ecological science at

three levels:

1. taxonomic science

2. design science

3. evolutionary science

- Action science: an approach to research that closely couples the development of knowledge and the application of that knowledge. Integrates the traditional scientific objectives of analysis and explanation with the engineering objective of melioration.

- Synthetic science: the design rationale that

surfaced during a design project can be grounded in existing scientific

theory, or can instantiate predictions that would extend existing theory

Detailed Description:

- the most important step in constructing a design

rationale is to identify a collection of typical and critical scenarios

of user interaction

- Methods for identifying scenarios:

- carry out the work that a system is intended to

support

- hold collaborative workshops with users to envision alternative ways of carrying out the work

- draw on prior work or case-study reports of other

design work

- instantiate general types of interaction

scenarios in the current domain

- transform existing scenarios

- Methods to recognize claims (tradeoffs) in scenarios

- Text analysis: raise questions about the

actions, events, goals, and experiences depicted in the scenario,

and jointly analyze scenarios with users

Case Study: MOOsburg

The design rationale associated with an interactive

system can be evaluated and refined. In the case of MOOsburg,

prototypes are still being developed, analyzed, used and refined.

When a design rationale is generalized, the hypothesized

causal regularities contribute to theory building. Design rationale

supports ecological science at three levels:

- supports taxonomic science by surveying and

documenting causal regularities in the usage situation

- supports design-based science through abstractions

that enable knowledge accumulation and application

- supports the development of evolutionary science by

promoting insights and development of new features and new situations

When the generalized rationale is grounded in established

scientific theory, it serves as an integrative frame within which to

understand and further investigate competing or complementary concerns

(synthetic science)

In general, scenarios and design rationale specify a shareable design space that can be used to raise, discuss, and arbitrate widely varying theoretical predictions and concerns.
